Music Overview

The music topic area is rich in opportunity for the creation of one-piece production workflows. A computational representation of a piece of music can be created within the body of the document and then rendered as sheet music. The same musical object can be used to generate audio files that play the piece via an embedded audio player. Corpora exist in which a wide variety of musical scores have been represented using standard document formats such as MusicXML. This ready availability of scores makes it relatively easy to create materials around well-known pieces, particularly if they are in the public domain.

If required, the generated audio files can be loaded as sampled waveforms and analysed using a variety of signal processing techniques.

In terms of creating learning materials, the one-piece flow approach provides a straightforward way of discovering or creating pieces of music, viewing the sheet music display, creating various visualisations over the music (for example, looking at pitch over the duration of the piece), and rendering an audio version of the music that we can embed in the output document and play back directly.

Sheet music and the (embedded) audio files can be generated directly from the representation of a piece of music. As well as creating MIDI files, we can also create audio files (e.g. .wav files). Using soundfonts, it is possible to create audio files using different instruments, where appropriate.

In terms of creating learning activities, learners can listen to provided pieces of music, as well as edit them and listen to the changes. Learners can also create their own pieces of music, from which they can directly generate audio and visual representations. This opens up the possibility of a wide range of hands-on music analysis tasks in a notebook setting, where learners can annotate the materials as well as create and respond to their own musical creations in a self-narrated way.

music21

The music21 package provides a wide ranging toolkit for computer-aided musicology.

Import packages from music21:

import music21

from music21 import *

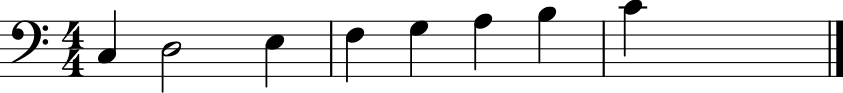

Create a simple score using TinyNotation

TinyNotation is a simple notation for representing music.

Whilst TinyNotation may not be appropriate for representing complex pieces of music, its straightforward syntax provides a very quick and easy way of creating a simple piece of music.

from music21 import converter

s = converter.parse('tinyNotation: 4/4 C4 D2 E4 F4 G4 A4 B4 c4')

s.show()
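The tokens in the TinyNotation string follow a compact convention: the letter gives the pitch (lowercase `c` is middle C, uppercase `C` the octave below it, and an apostrophe raises a lowercase note by an octave), and the number gives the duration as a fraction of a whole note (`4` is a quarter note, `2` a half note). As a minimal sketch of that convention, the hypothetical `decode()` helper below turns single tokens into (pitch, octave, quarter length) triples. It is a simplified illustration, not music21's own parser: it ignores rests, ties, triplets and durations carried over from a previous note, and assumes any accidental (`#` for sharp, `-` for flat, as in music21 pitch names) comes after any apostrophes.

```python
import re

# Hypothetical helper: a simplified decoder for single TinyNotation note tokens.
# Not music21's parser; rests, ties, triplets and carried-over durations are ignored.
TOKEN = re.compile(r"^([A-Ga-g]+)('*)([#-]*)(\d+)(\.?)$")


def decode(token):
    """Return (pitch name, octave, quarter length) for a token such as 'C4' or "e'8."."""
    m = TOKEN.match(token)
    if m is None:
        raise ValueError(f"unsupported token: {token!r}")
    letters, ticks, accidental, number, dot = m.groups()
    if len(set(letters)) != 1:
        raise ValueError(f"mixed letters in token: {token!r}")
    if letters.islower():
        if len(letters) > 1:
            raise ValueError("this sketch only accepts a single lowercase letter")
        octave = 4 + len(ticks)      # c = middle C (C4); each apostrophe raises an octave
    else:
        if ticks:
            raise ValueError("this sketch only uses apostrophes with lowercase letters")
        octave = 4 - len(letters)    # C = C3, CC = C2, ...
    quarter_length = 4 / int(number)  # 4 = quarter note, 2 = half note, 8 = eighth note
    if dot:
        quarter_length *= 1.5         # a dot adds half the note's value
    return letters[0].upper() + accidental, octave, quarter_length


melody = "C4 D2 E4 F4 G4 A4 B4 c4"
for token in melody.split():
    print(token, decode(token))
```

Read this way, the example melody runs from C3 up to middle C and lasts nine quarter notes in total.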

Other visualisations of the music are also possible. For example, we can look at the pitch and note length:

s.plot()

<music21.graph.plot.HorizontalBarPitchSpaceOffset for <music21.stream.Part 0x104943e20>>

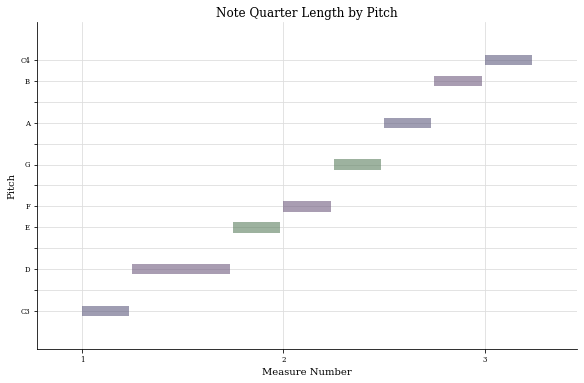

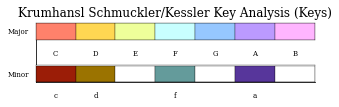

Or analyse the key:

s.plot('key')

<music21.graph.plot.WindowedKey for <music21.stream.Part 0x104943e20>>

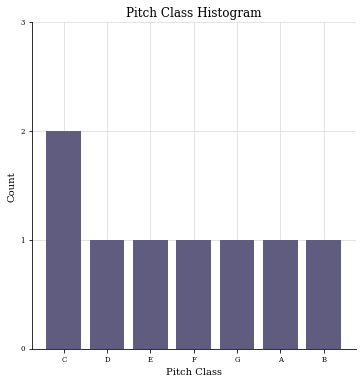

We can analyse the music in terms of pitch distribution:

s.plot('histogram', 'pitchClass')

<music21.graph.plot.HistogramPitchClass for <music21.stream.Part 0x104943e20>>
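A pitch-class histogram folds every octave together, so C3 and C4 both count as pitch class 0 (C), with the other note names numbered by semitones above C. A minimal sketch of that counting, using a hypothetical `pitch_class()` helper on the note names of the TinyNotation melody above; it counts each note once, which is an assumption about what the plot shows rather than a description of music21's implementation.

```python
from collections import Counter

# Semitone offset of each natural note name above C
NATURAL = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}


def pitch_class(name):
    """Map a pitch name such as 'C', 'F#' or 'B-' (music21-style flat) to 0-11."""
    pc = NATURAL[name[0].upper()]
    for symbol in name[1:]:
        if symbol == "#":
            pc += 1
        elif symbol == "-":
            pc -= 1
        else:
            raise ValueError(f"unexpected symbol in {name!r}")
    return pc % 12


# Pitch names of the TinyNotation melody above, octaves dropped (C3 ... B3, C4)
melody = ["C", "D", "E", "F", "G", "A", "B", "C"]
histogram = Counter(pitch_class(n) for n in melody)
for pc in range(12):
    print(pc, "#" * histogram[pc])
```

Only the white-note pitch classes appear, and C is the only one counted twice because the melody starts and ends on it.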

MIDI File Generation and Playback

When in a live, interactive Jupyter notebook, we can listen to the same piece of music by creating a MIDI file from it and passing that file to an embedded audio player:

s.show('midi')

At the time of writing, it seems that the Jupyter Book page needs to be served from a web server for the MIDI player to load. A workaround that avoids the need for a web server is to save a score as a MIDI file, convert it to an audio file, and then load the audio file into an IPython Audio() player.

An advantage of this second approach is that we generate a .wav audio asset that can be played by any music player. A downside is that the .wav file may be quite large, and will also take time to create from the original MIDI file.

fn_midi = s.write('midi', fp='demo.mid')
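To put "quite large" into numbers: the sample data of an uncompressed .wav file takes sample rate × channels × bytes per sample × duration bytes. Assuming CD-quality settings (44.1 kHz, 16-bit, stereo), which are illustrative values rather than anything stated here about the converter's output, the hypothetical `wav_data_bytes()` helper below gives roughly 10.6 MB per minute. A MIDI file, by contrast, only stores note events and is typically far smaller.

```python
def wav_data_bytes(duration_s, sample_rate=44100, channels=2, bytes_per_sample=2):
    """Bytes of uncompressed PCM sample data; the WAV header adds a few dozen more."""
    frames = round(duration_s * sample_rate)
    return frames * channels * bytes_per_sample


size = wav_data_bytes(60)
print(f"{size:,} bytes (about {size / 1e6:.1f} MB) per minute")
```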

Converting Midi Files to Audio Files

We can convert a MIDI file to an audio file (e.g. a .wav file) using the cross-platform fluidsynth command-line application.

This application also requires the installation of a soundfont such as the GeneralUser soundfont.

# fluidsynth: https://www.fluidsynth.org/

#!brew install fluidsynth

# Requires a soundfont: https://github.com/FluidSynth/fluidsynth/wiki/SoundFont -> https://www.dropbox.com/s/4x27l49kxcwamp5/GeneralUser_GS_1.471.zip?dl=1

#http://www.schristiancollins.com/generaluser.php

#%pip install git+https://github.com/nwhitehead/pyfluidsynth.git

A utility function will handle the conversion for us.

#Based on: https://gist.github.com/devonbryant/1810984
import os
import re
import subprocess

def to_audio(midi_file, sf2="GeneralUser/GeneralUser.sf2",
             out_dir=".", out_type='wav', txt_file=None, append=True):
    """
    Convert a single midi file to an audio file. If a text file is specified,
    the first line of text in the file will be used in the name of the output
    audio file. For example, with a MIDI file named '01.mid' and a text file
    with 'A major', the output audio file would be 'A_major_01.wav'. If
    append is false, the output name will just use the text (e.g. 'A_major.wav')

    Args:
        midi_file (str): the file path for the .mid midi file to convert
        sf2 (str): the file path for a .sf2 soundfont file
        out_dir (str): the directory path for where to write the audio out
        out_type (str): the output audio type (see 'fluidsynth -T help' for options)
        txt_file (str): optional text file with additional information of how to name
            the output file
        append (bool): whether or not to append the optional text to the original
            .mid file name or replace it

    Returns:
        str: the path of the audio file fluidsynth was asked to write
    """
    fbase = os.path.splitext(os.path.basename(midi_file))[0]
    if not txt_file:
        out_file = os.path.join(out_dir, fbase + '.' + out_type)
    else:
        with open(txt_file, 'r') as f:
            # Use the first line of the text file, with whitespace replaced by underscores
            line = re.sub(r'\s', '_', f.readline().strip()) or 'out'
        if append:
            out_file = os.path.join(out_dir, line + '_' + fbase + '.' + out_type)
        else:
            out_file = os.path.join(out_dir, line + '.' + out_type)
    # -F renders to a file, -T sets the file type, -ni disables MIDI input and the shell
    subprocess.call(['fluidsynth', '-T', out_type, '-F', out_file, '-ni', sf2, str(midi_file)])
    return out_file
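Because to_audio() uses subprocess.call() and does not check fluidsynth's exit status, a problem such as a missing soundfont may only become apparent later, for example as a missing or silent .wav file. A hypothetical pre-flight check, not part of the original workflow, can report the two prerequisites up front; the default soundfont path below simply mirrors the default used by to_audio().

```python
import os
import shutil


def check_audio_toolchain(sf2="GeneralUser/GeneralUser.sf2"):
    """Return a list of problems that would stop to_audio() from producing a file."""
    problems = []
    # to_audio() calls the fluidsynth executable by name, so it must be on PATH
    if shutil.which("fluidsynth") is None:
        problems.append("fluidsynth executable not found on PATH")
    # The default soundfont path matches the default used by to_audio()
    if not os.path.isfile(sf2):
        problems.append(f"soundfont not found: {sf2}")
    return problems


for problem in check_audio_toolchain():
    print("Warning:", problem)
```

An empty list means both the executable and the soundfont were found.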

Pass the name of the MIDI file to create a .wav file:

#to_audio("alto.mid")

to_audio(fn_midi)

We can now embed an audio player to play the wav file:

from IPython.display import Audio

Audio(str(fn_midi).replace(".mid", ".wav"))

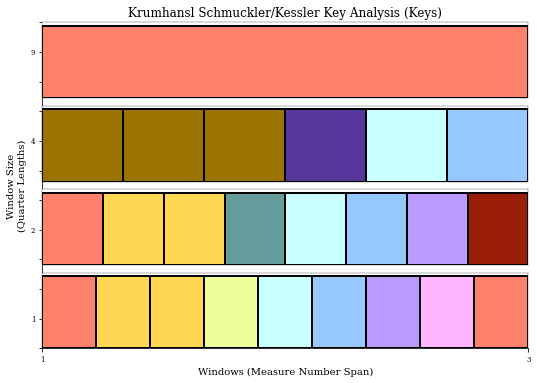

Constructing Music From Primitives

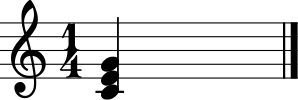

If we need more control over the music, we can construct things like chords directly:

from music21 import chord

c = chord.Chord("C4 E4 G4")

c

<music21.chord.Chord C4 E4 G4>

We can render the object visually as a piece of music:

c.show()

Or listen to it:

#c.show('midi')

def audio_play(s, fn="dummy.mid"):
    """Save stream to midi, convert to wav, and load into audio player."""
    fn_midi = s.write('midi', fp=fn)
    to_audio(fn_midi)
    # s.write() may return a pathlib.Path, so convert to str before swapping the extension
    return Audio(str(fn_midi).replace(".mid", ".wav"))

audio_play(c, "c.mid")

We can test for particular musical properties of the object:

c.isConsonant()

True
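Behind a check like isConsonant() is interval arithmetic. C4 E4 G4 stacks a major third (4 semitones) and a minor third (3 semitones), which makes it a major triad. The sketch below shows that arithmetic with hypothetical `midi_number()` and `triad_quality()` helpers; music21's own consonance rules are more detailed, so this is an illustration of the idea, not a re-implementation of isConsonant().

```python
import re

# Semitone offset of each natural note name above C
NATURAL = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}
NAME = re.compile(r"^([A-Ga-g])([#-]*)(\d+)$")

# Root-position triads, identified by their two stacked intervals (in semitones)
QUALITIES = {(4, 3): "major", (3, 4): "minor", (3, 3): "diminished", (4, 4): "augmented"}


def midi_number(name):
    """'C4' -> 60 (middle C); '#' raises and '-' lowers by a semitone."""
    m = NAME.match(name)
    if m is None:
        raise ValueError(f"unsupported pitch name: {name!r}")
    step, accidentals, octave = m.groups()
    semitone = NATURAL[step.upper()] + accidentals.count("#") - accidentals.count("-")
    return (int(octave) + 1) * 12 + semitone


def triad_quality(spec):
    """Classify a close-position, root-position triad such as 'C4 E4 G4'."""
    low, mid, high = sorted(midi_number(p) for p in spec.split())
    return QUALITIES.get((mid - low, high - mid), "other")


for spec in ["C4 E4 G4", "A3 C4 E4", "B3 D4 F4"]:
    print(spec, triad_quality(spec))
```

Inversions and spread voicings fall through to "other" in this sketch, which is one reason a real library needs more elaborate rules.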

Access to Scores

The music21 package includes a wide-ranging corpus of scores that we can work with without having to download any additional files:

from music21 import corpus

corpus.getPaths()[:3]

[PosixPath('/usr/local/lib/python3.9/site-packages/music21/corpus/airdsAirs/book1.abc'),

PosixPath('/usr/local/lib/python3.9/site-packages/music21/corpus/airdsAirs/book2.abc'),

PosixPath('/usr/local/lib/python3.9/site-packages/music21/corpus/airdsAirs/book3.abc')]

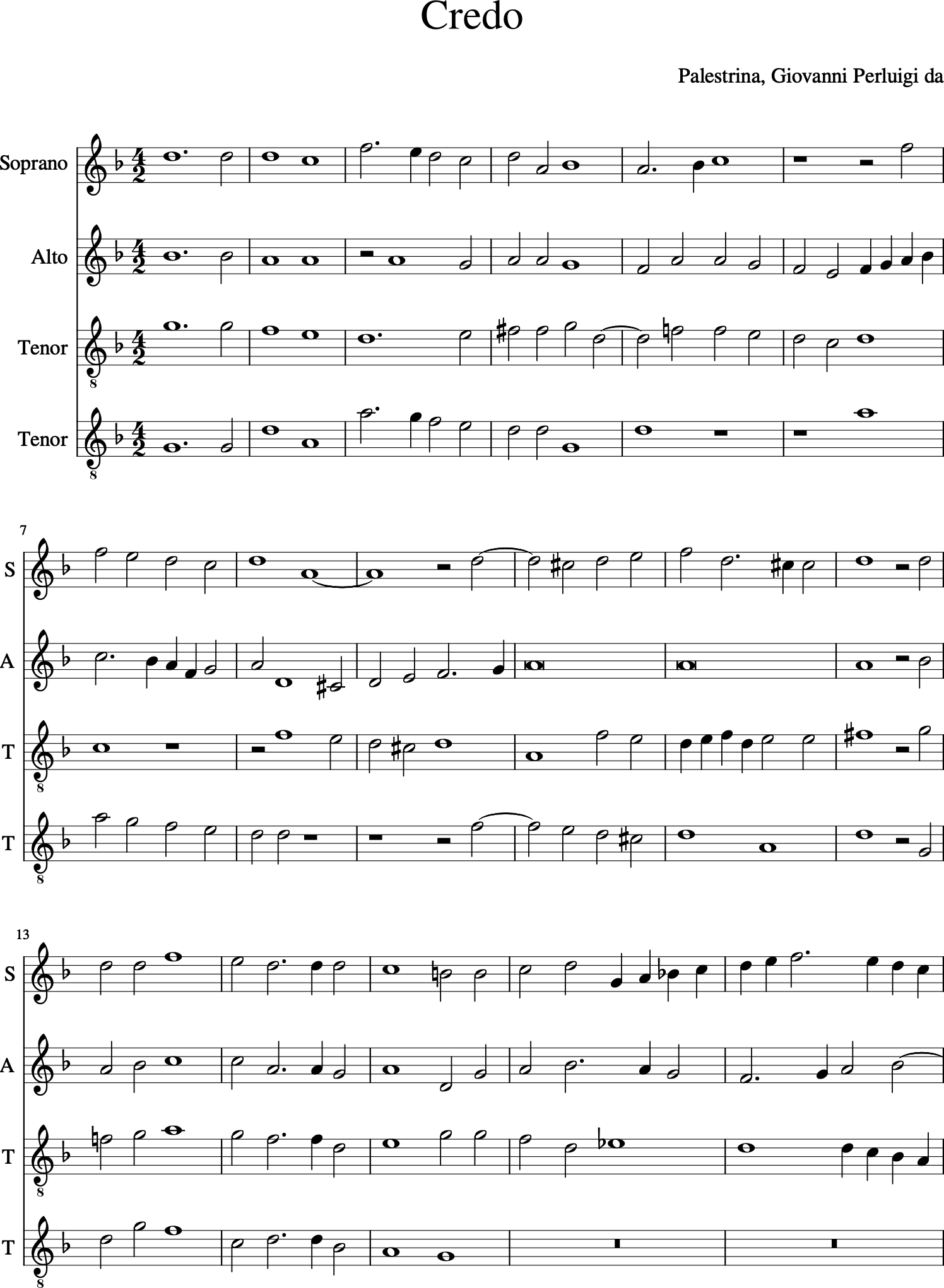

Select a random piece:

import random

selected_piece = random.choice(corpus.getPaths())

p = corpus.parse(selected_piece)

p.show()

Each piece has associated metadata that we can extract directly:

p.metadata.title, p.metadata.composer

('Crucifixus', 'Palestrina, Giovanni Perluigi da')
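Because random.choice() draws a different piece each time the notebook runs, the title and composer shown above belong to one particular run and may not match the score displayed once the book is rebuilt. One way to keep text and score in step is to fix the seed. The hypothetical `choose_piece()` helper and the seed value are illustrative choices, and the approach assumes corpus.getPaths() returns the paths in the same order on each run.

```python
import random


def choose_piece(paths, seed=None):
    """Pick one item from paths; the same seed gives the same pick on every run."""
    rng = random.Random(seed)  # private generator, leaves the global random state alone
    return rng.choice(list(paths))


# Stand-in strings; in the notebook this would be corpus.getPaths()
paths = ["bach/bwv57.8", "luca/gloria", "airdsAirs/book1.abc"]
print(choose_piece(paths, seed=2021))
```

In the notebook, the selection line would become something like `selected_piece = choose_piece(corpus.getPaths(), seed=2021)`.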

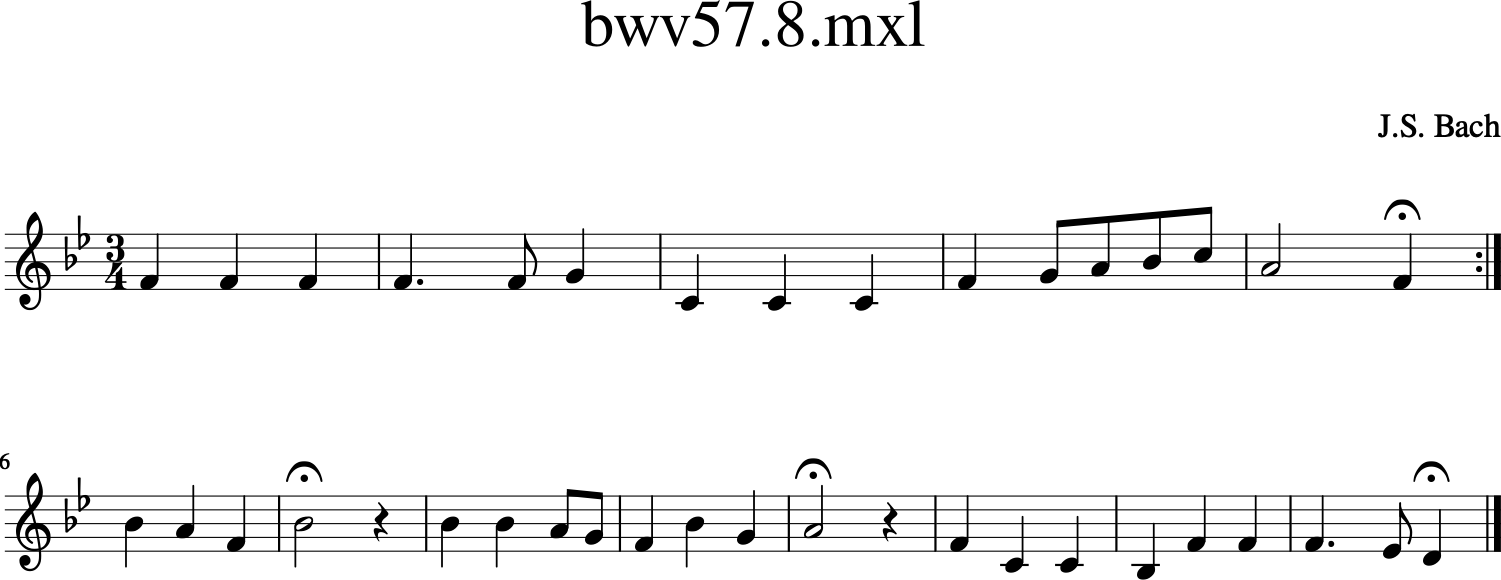

We can load in a piece by specific reference:

myBach = corpus.parse('bach/bwv57.8')

alto = myBach.parts['Alto']

alto.show()

As you might expect, we can also listen to the piece:

#alto.show('midi')

audio_play(alto, "alto1.mid")

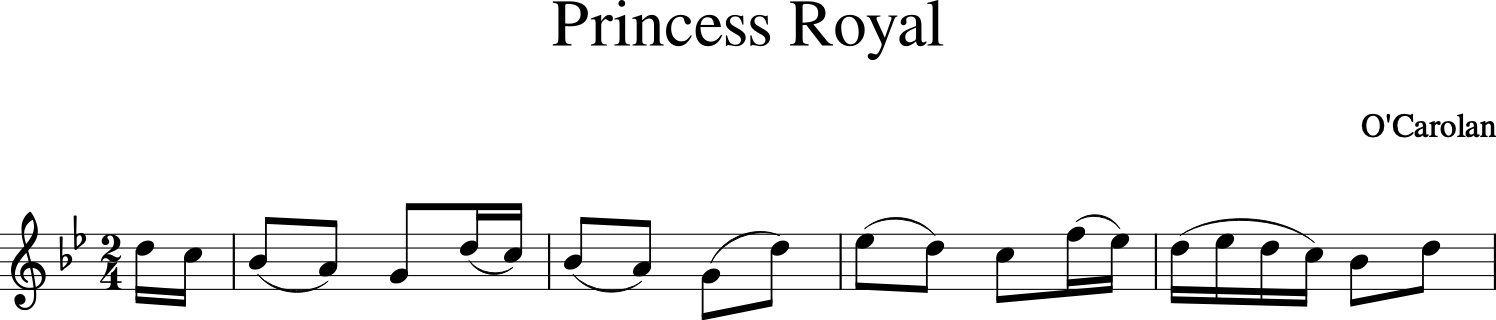

Searching Through Scores

We can search through these scores to find a piece of music that we can then use within the notebook.

For example, we can search by composer:

a = [c for c in corpus.search('carolan', 'composer')]

a[0].metadata.all()

[('ambitus',

"AmbitusShort(semitones=17, diatonic='P4', pitchLowest='D4', pitchHighest='G5')"),

('composer', "O'Carolan"),

('keySignatureFirst', '-1'),

('keySignatures', '[-1]'),

('noteCount', '77'),

('number', '647'),

('numberOfParts', '1'),

('pitchHighest', 'G5'),

('pitchLowest', 'D4'),

('quarterLength', '48.5'),

('timeSignatureFirst', '5/8'),

('timeSignatures', "['5/8', '6/8']"),

('title', 'My Dermot')]
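The metadata.all() output is a list of (name, value) pairs, and the values come back as strings (for example '77' for noteCount). To tabulate or compare search results, a minimal sketch using a hypothetical `summarise()` helper converts the pairs to a dict and casts the numeric fields. The pairs are copied from the output above so that the example runs on its own; in the notebook you would pass `a[0].metadata.all()` instead.

```python
# (name, value) pairs copied from the metadata.all() output above (abridged)
pairs = [
    ("composer", "O'Carolan"),
    ("noteCount", "77"),
    ("numberOfParts", "1"),
    ("quarterLength", "48.5"),
    ("timeSignatureFirst", "5/8"),
    ("title", "My Dermot"),
]


def summarise(pairs):
    """Turn (name, value) string pairs into a dict with numeric fields converted."""
    meta = dict(pairs)
    return {
        "title": meta.get("title"),
        "composer": meta.get("composer"),
        "noteCount": int(meta.get("noteCount", 0)),
        "quarterLength": float(meta.get("quarterLength", 0)),
    }


print(summarise(pairs))
```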

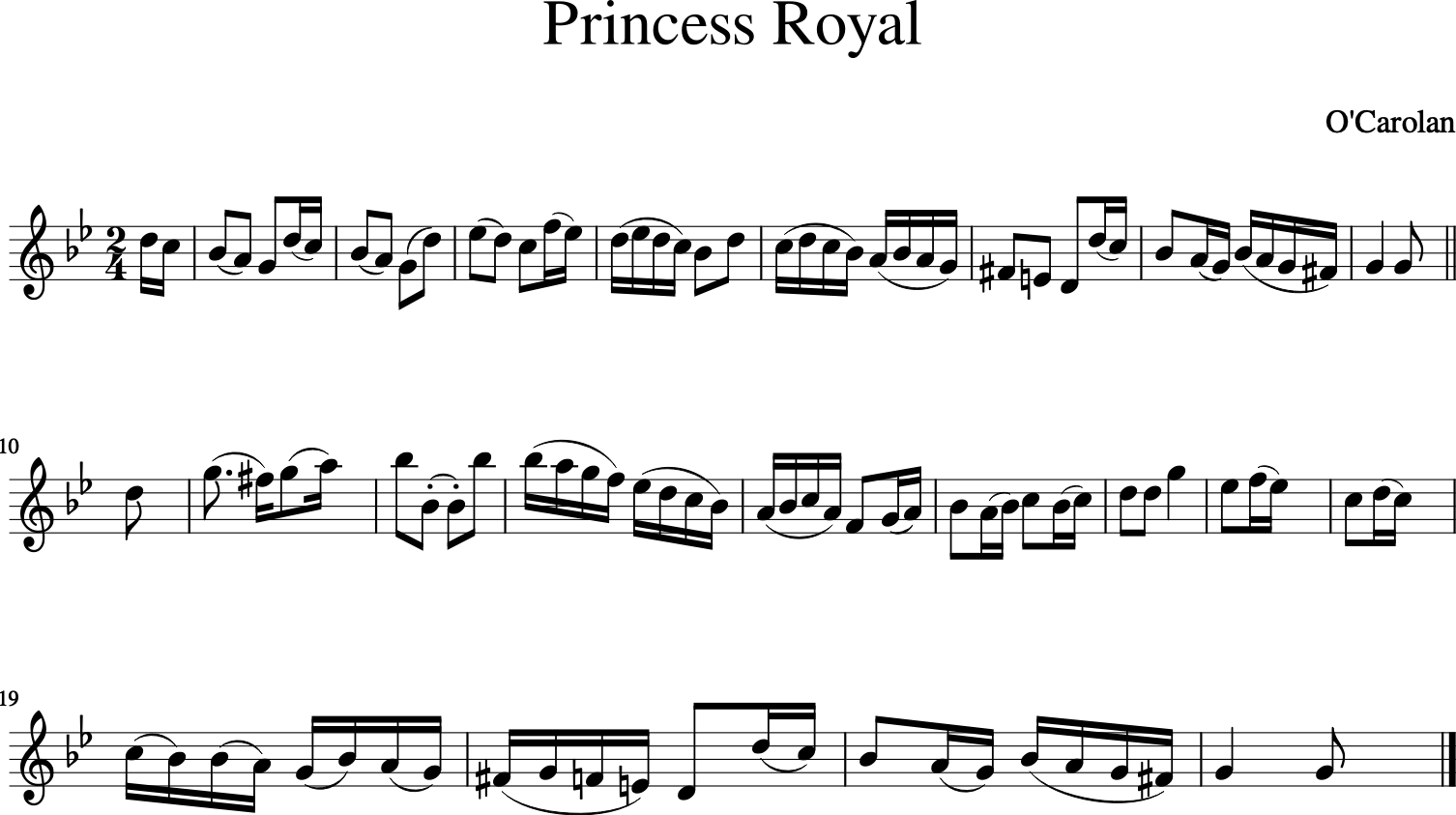

Searches return a metadata bundle, which can itself be searched by chaining search queries:

c = corpus.search('carolan', 'composer').search('Princess Royal', 'title')

c[0].parse().show()

#c[0].show('midi')

audio_play(c[0].parse(), "part.mid")

Searching Lyrics

Many of the pieces of music in the music21 corpus include lyrics. If we have a piece of music with lyrics, we can search within it for particular search terms.

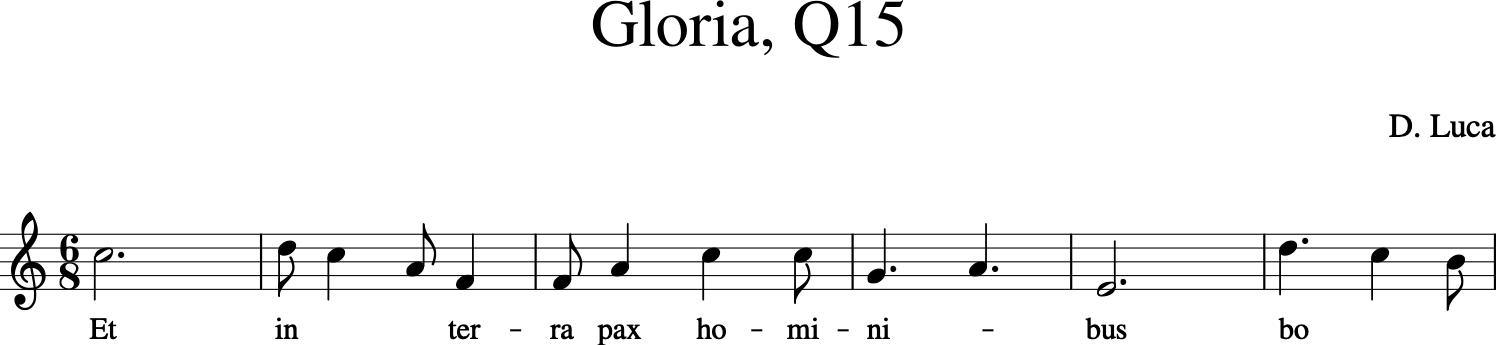

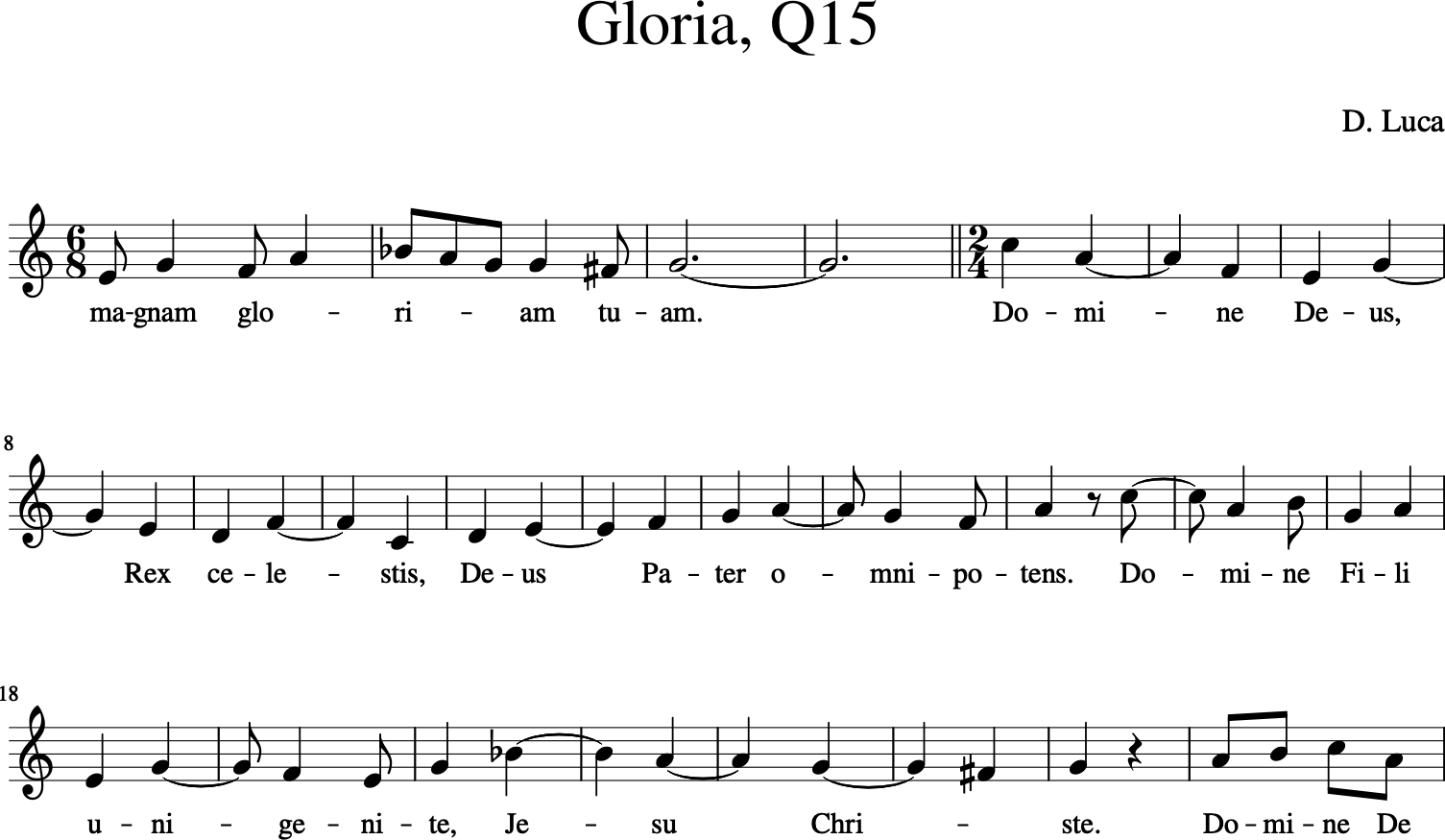

For example, consider the following piece:

#http://web.mit.edu/music21/doc/usersGuide/usersGuide_28_lyricSearcher.html

luca = corpus.parse('luca/gloria')

cantus = luca.parts[0]

cantus.measures(1, 6).show()

We can extract all the lyrics and then search that as we would any text string:

music21.text.assembleLyrics(cantus)

'Et in terra pax hominibus bone voluntatis. Laudamus te. Benedicimus te. Adoramus te. Glorificamus te. Gratias agimus tibi propter magnam gloriam tuam. Domine Deus, Rex celestis, Deus Pater omnipotens. Domine Fili unigenite, Jesu Christe. Domine Deus, Agnus Dei, Filius Patris. Qui tollis peccata mundi, miserere nobis. Qui tollis peccata mundi, suscipe deprecationem nostram. Qui sedes ad dexteram Patris, miserere nobis. Quoniam tu solus Sanctus. Tu solus Dominus. Tu solus Altissimus, Jesu Christe. Cum Sancto Spiritu in gloria Dei Patris. Amen.'
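Treating the lyrics as plain text, a regular expression can find each whole-word occurrence of a term together with the word that follows it. The hypothetical `find_word()` helper below is a sketch run on an excerpt of the string above. It locates the word in the text but not in the score, which is what the lyric searcher below adds.

```python
import re

# Excerpt of the assembled lyrics shown above
lyrics = (
    "Gratias agimus tibi propter magnam gloriam tuam. "
    "Domine Deus, Rex celestis, Deus Pater omnipotens. "
    "Domine Fili unigenite, Jesu Christe. "
    "Domine Deus, Agnus Dei, Filius Patris. "
    "Qui tollis peccata mundi, miserere nobis."
)


def find_word(text, word):
    """Return (character offset, next word) for each whole-word match of word."""
    pattern = re.compile(r"\b" + re.escape(word) + r"\b\W*(\w*)")
    return [(m.start(), m.group(1)) for m in pattern.finditer(text)]


for offset, following in find_word(lyrics, "Domine"):
    print(offset, "Domine", following)
```

The three matches correspond to the three occurrences of "Domine" in the full lyrics; the whole-word boundaries stop "Dominus" from matching.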

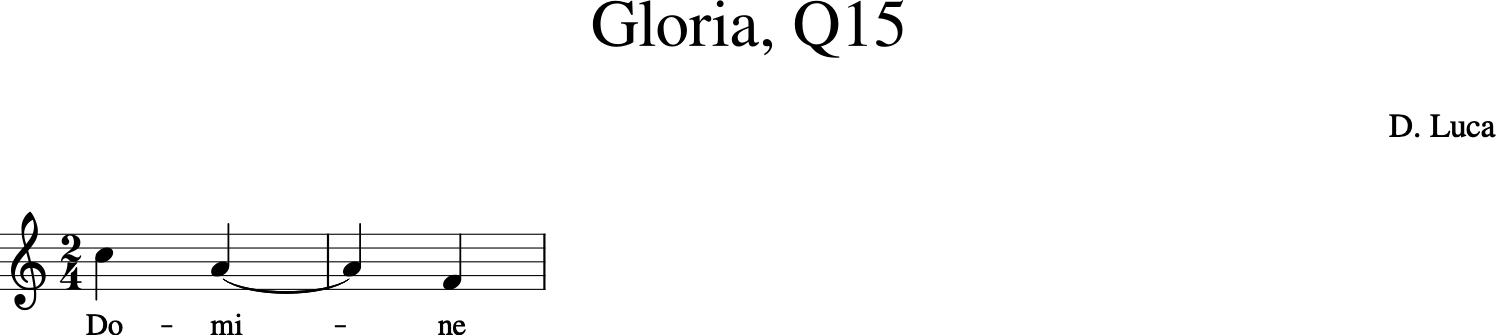

Or we can search the music and retrieve the location by measure:

lyric_search = search.lyrics.LyricSearcher(cantus)

domineResults = lyric_search.search("Domine")

domineResults

[SearchMatch(mStart=28, mEnd=29, matchText='Domine', els=(<music21.note.Note C>, <music21.note.Note A>, <music21.note.Note F>), indices=[...], identifier=1),

SearchMatch(mStart=38, mEnd=39, matchText='Domine', els=(<music21.note.Note C>, <music21.note.Note A>, <music21.note.Note B>), indices=[...], identifier=1),

SearchMatch(mStart=48, mEnd=48, matchText='Domine', els=(<music21.note.Note A>, <music21.note.Note B>, <music21.note.Note C>), indices=[...], identifier=1)]

From the response, we see the measure range associated with each occurrence of the search term. The searcher reconstructs the term from the lyrics because, as you will see in the music, the word is split into syllables spread over several notes:

cantus.measures(domineResults[0].mStart, domineResults[0].mEnd).show()

Let’s look over the full range of the results:

cantus.measures(24, 48).show()
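The range 24 to 48 above was chosen by hand. A similar range can be derived from the search results themselves by taking the earliest `mStart` and the latest `mEnd`. In the notebook you could pass `domineResults` to the hypothetical `measure_span()` helper below and show `cantus.measures(*measure_span(domineResults))`; here a namedtuple stand-in holding the values from the output above keeps the sketch runnable without music21. The helper does not clamp the end of the range to the length of the piece.

```python
from collections import namedtuple

# Stand-in for the search results: only the measure numbers are used, and the
# values are copied from the domineResults output above.
Match = namedtuple("Match", ["mStart", "mEnd", "matchText"])
results = [Match(28, 29, "Domine"), Match(38, 39, "Domine"), Match(48, 48, "Domine")]


def measure_span(matches, context=0):
    """Smallest (start, end) measure range covering every match, padded by context bars."""
    start = min(m.mStart for m in matches) - context
    end = max(m.mEnd for m in matches) + context
    return max(start, 0), end


print(measure_span(results))             # measures 28 to 48
print(measure_span(results, context=4))  # measures 24 to 52
```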

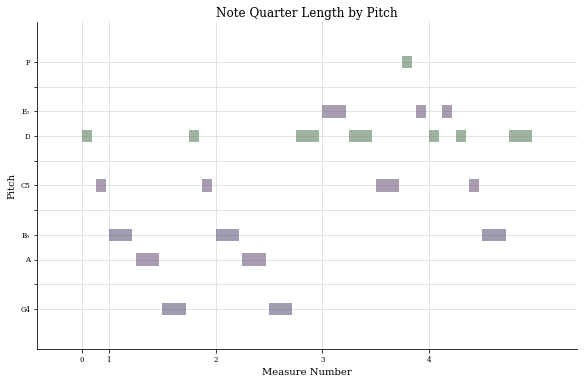

Viewing Part of a Piece of Music

When discussing a particular piece, we may want to focus on particular parts of it. We can reference particular measures and focus on just those. For example, suppose we want to inspect just the opening measures of a piece:

c[0].parse().measures(0,4).show()

Visualising the pitch and duration of notes in the opening:

c[0].parse().measures(0,4).plot()

<music21.graph.plot.HorizontalBarPitchSpaceOffset for <music21.stream.Score 0x1270b5970>>

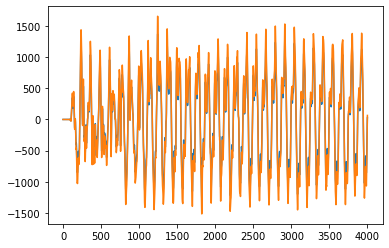

Visualising Audio Files

Having got an audio file representation, we can then process it as a sound file. For example, we can look at the waveform:

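from scipy.io import wavfile

%matplotlib inline

from matplotlib import pyplot as plt

import numpy as np

# alto1.wav was created by audio_play(alto, "alto1.mid") above
samplerate, data = wavfile.read('alto1.wav')

# Time in seconds of each sample
times = np.arange(len(data)) / float(samplerate)

plt.plot(times[:4000], data[:4000])
plt.xlabel('Time (s)');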
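As one example of the signal processing mentioned at the start, the strongest frequency in a stretch of audio can be estimated from its magnitude spectrum. The hypothetical `dominant_frequency()` helper below (NumPy only) mixes stereo data to mono, applies a Hann window and picks the largest FFT bin; it is checked here on a synthetic 440 Hz tone rather than on the score's audio.

```python
import numpy as np


def dominant_frequency(samples, samplerate):
    """Frequency (Hz) of the strongest component in a block of audio samples."""
    x = np.asarray(samples, dtype=float)
    if x.ndim == 2:          # stereo data has shape (n_samples, n_channels): mix to mono
        x = x.mean(axis=1)
    x = x - x.mean()         # remove any DC offset
    spectrum = np.abs(np.fft.rfft(x * np.hanning(len(x))))
    freqs = np.fft.rfftfreq(len(x), d=1.0 / samplerate)
    return freqs[np.argmax(spectrum)]


# Synthetic check: one second of a 440 Hz sine wave sampled at 44.1 kHz
samplerate = 44100
t = np.arange(samplerate) / samplerate
tone = np.sin(2 * np.pi * 440 * t)
print(dominant_frequency(tone, samplerate))
```

The same function can be applied to a slice of the `data` array read above. Note that the strongest component of an instrument sound is not always its fundamental, so treat the result as a rough indication of pitch.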

abc.js

ABC is a simple text-based notation for music, and abc.js (abcjs) is a JavaScript library for rendering it.

We can use a simple IPython cell magic to write and render ABC scores (this seems to work only in a live notebook, not in the published Jupyter Book):

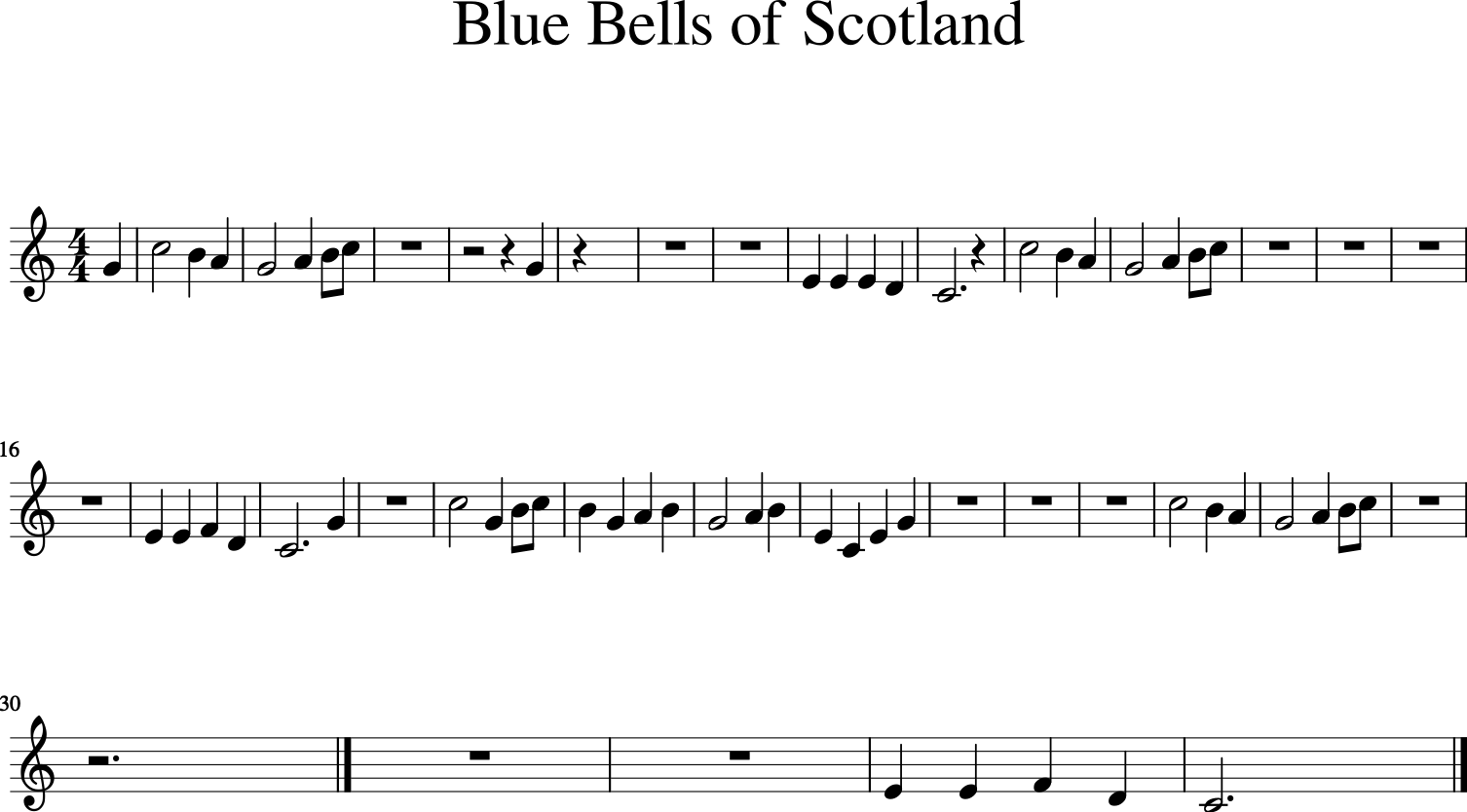

%%abc
%%score (R1 R2) (L)
X: 1
T: Blue Bells of Scotland
M: 4/4
L: 1/8
K: C
V:R
G2|c4B2A2|G4A2Bc|z8|z4z2G2|
V:L
z2|z8|z8|E2E2E2D2|C6z2|
V:R
|c4B2A2|G4A2Bc|z8|z8|
V:L
|z8|z8|E2E2F2D2|C6G2|
V:R
z8|c4G2Bc|B2G2A2B2|G4A2B2|
V:L
E2C2E2G2|z8|z8|z8|
V:R
c4B2A2|G4A2Bc|z8|z6|]
V:L
z8|z8|E2E2F2D2|C6|]
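In ABC, each header line is a letter followed by a colon: X is the reference number, T the title, M the meter, L the default note length, K the key and V a voice. A number after a note multiplies the default length, so with `L: 1/8` the token `c4` is four eighth notes, i.e. a half note. The hypothetical helpers below are a simplified sketch, not an ABC parser: they read the header fields and add up each bar of the first right-hand line. Every full bar of this 4/4 tune should hold 8 eighth-note units, and the opening `G2` is a two-unit pickup.

```python
import re
from fractions import Fraction

# Header and first right-hand line of the tune above
tune = """X: 1
T: Blue Bells of Scotland
M: 4/4
L: 1/8
K: C
V:R
G2|c4B2A2|G4A2Bc|z8|z4z2G2|"""


def abc_headers(text):
    """Collect single-letter header fields (X, T, M, L, K, V, ...), keeping the first of each."""
    headers = {}
    for line in text.splitlines():
        m = re.match(r"^([A-Za-z]):\s*(.*)$", line)
        if m:
            headers.setdefault(m.group(1), m.group(2).strip())
    return headers


def bar_lengths(line):
    """Length of each bar in units of L; plain notes and rests only (no accidentals,
    octave marks, chords, tuplets or fractional lengths)."""
    return [
        sum(int(n or 1) for _, n in re.findall(r"([A-Ga-gz])(\d*)", bar))
        for bar in line.split("|")
        if re.search(r"[A-Ga-gz]", bar)
    ]


headers = abc_headers(tune)
units_per_bar = Fraction(headers["M"]) / Fraction(headers["L"])  # (4/4) / (1/8) = 8
print(headers["T"], units_per_bar, bar_lengths(tune.splitlines()[-1]))
```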

The music21 package can also parse ABC notation saved in a file:

abcjs = '''
X: 1
T: Blue Bells of Scotland
M: 4/4
L: 1/8
K: C
V:R
G2|c4B2A2|G4A2Bc|z8|z4z2G2|
V:L
z2|z8|z8|E2E2E2D2|C6z2|
V:R
|c4B2A2|G4A2Bc|z8|z8|
V:L
|z8|z8|E2E2F2D2|C6G2|
V:R
z8|c4G2Bc|B2G2A2B2|G4A2B2|
V:L
E2C2E2G2|z8|z8|z8|
V:R
c4B2A2|G4A2Bc|z8|z6|]
V:L
z8|z8|E2E2F2D2|C6|]
'''

abc_fn = 'demo.abc'

with open(abc_fn, 'w') as outfile:
    outfile.write(abcjs)

Simply use the music21.converter.parse() function with the file name to create a music21 object that we can use in the normal way (viewing the sheet music, creating an embedded audio version, etc.):

converter.parse(abc_fn).show()